AI as your Coworker – First Legal Assessment

We’re Ellen and Simone. After 36 years in finance, we’re ready to share what textbooks won’t tell you.

💛 Welcome to CFO Playbook – your practical finance insights delivered bi-weekly. The full read will take approximately 5 minutes.

READ OF THE WEEK

AI is landing in real finance workflows – but what does that mean legally?

We spoke with Dr. Benedikt Flöter, Partner at YPOG and one of Germany’s leading AI & Emerging Technologies lawyers, about compliance, data privacy, and governance for CFOs.

In this Read of the Week

1. Your team is already using AI – the question is how

2. Financial data & GDPR – what’s actually at risk?

3. Shadow AI, IT Security & the talent problem

4. Governance, AI policies & practical next steps

5. Liability, compliance & falling behind

1. Your team is already using AI – the question is how

More and more finance teams are buying proper AI licenses – Claude Team, Enterprise, ChatGPT Business – with admin controls, security features, and no training on your data. Good. But a license alone doesn’t solve the legal picture.

The real risk: if your company doesn’t provide approved tools, people don’t stop using AI. They switch to personal accounts. Free versions. No data protection. Everything potentially feeding into model training.

Shadow AI is a growing risk.

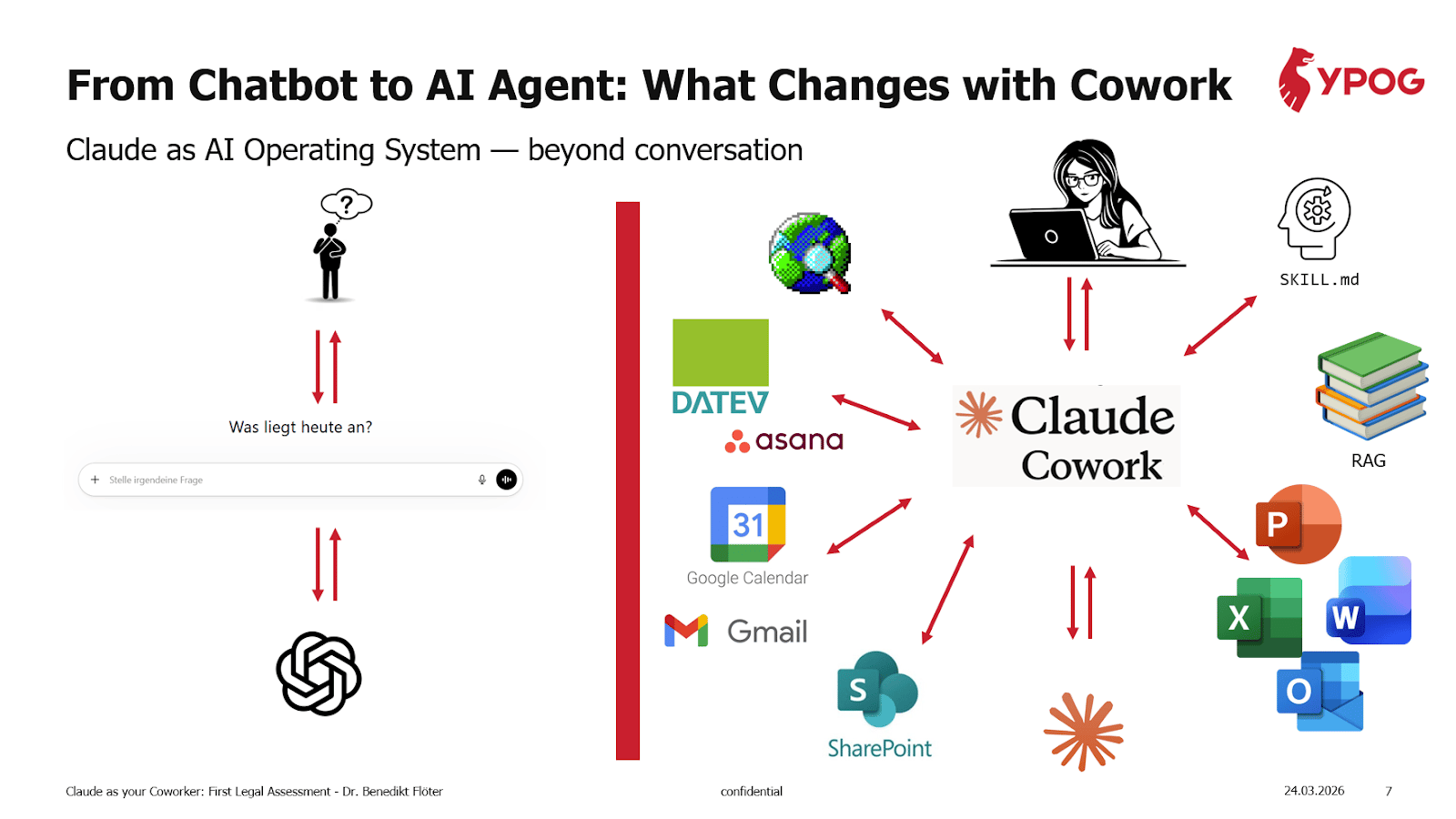

And even with the right license, there’s a big legal shift happening. Classic AI chat is low risk.

Agentic AI is a different game:

Classic chat (ChatGPT, Claude.ai, Copilot):

- One prompt → one answer, text only
- No access to files or systems
- User always in control
- Risk: low

Agentic AI (Claude Cowork, Copilot Agents):

- Describe outcome → agent executes autonomously
- Direct access to files, email, Slack, ERP via MCP
- Scheduled tasks run unattended
- Risk: high – real actions, real consequences

Worth noting: Cowork is still in “Research Preview.” Anthropic’s own documentation states that Cowork activity isn’t captured in audit logs or compliance exports, and explicitly warns against using it for regulated workloads. That doesn’t mean you can’t use Claude – it means the agentic desktop tool needs extra caution until Anthropic closes these gaps.

💡 The starting checklist:

(1) Give your team a company-approved AI license with data protection built in. (2) Understand which AI features (chat vs. agents) your team actually uses – and what that means for GDPR, liability, and governance. (3) Don’t wait for the perfect setup. The risk of doing nothing is that your team is already using AI without any guardrails at all.

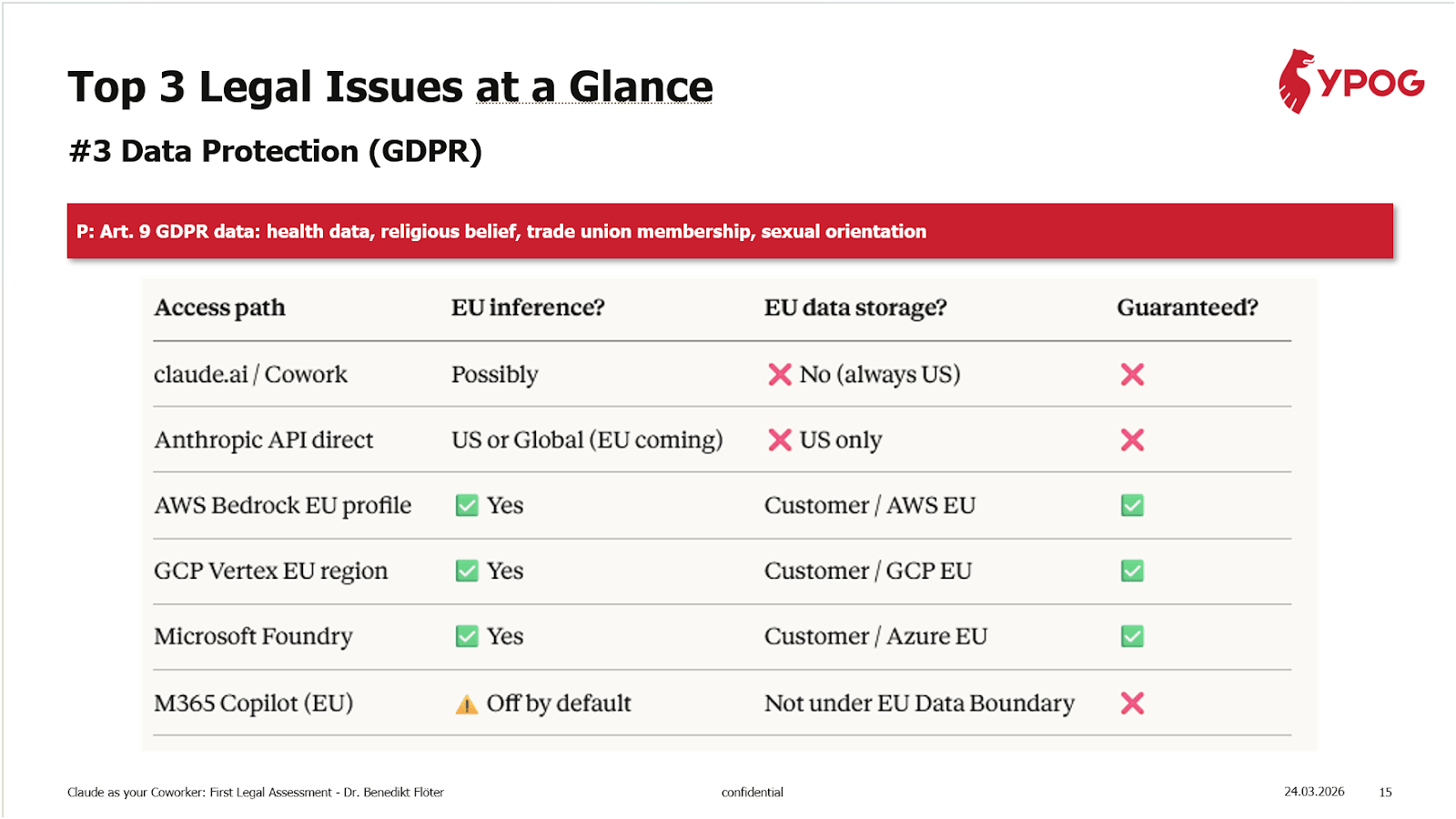

2. Financial data & GDPR – what’s actually at risk?

Finance teams handle some of the most sensitive data in any company – revenue, salaries, investor information, forecasts. The key question: where does that data go when you use AI?

The good news: GDPR compliance with AI tools is solvable – it's the most manageable of the three legal risks (see pyramid in section 5). But you need the right setup.

If your team uses claude.ai or Cowork directly, data is stored in the US – no EU hosting guarantee. Same for the Anthropic API today. Under GDPR, this triggers additional requirements:

- Data Processing Agreements (DPAs)
- Standard Contractual Clauses (SCCs)
- Data Protection Impact Assessments (DPIAs)
- Transfer Impact Assessments (TIAs)

This isn't theoretical. In 2023, Meta was fined €1.2 billion – still the largest GDPR fine ever – for transferring EU user data to the US without adequate safeguards. The case turned on US surveillance law such as FISA Section 702, which, like the CLOUD Act, can give authorities access to data held by US companies. It also showed that Standard Contractual Clauses alone may not be enough.

Using Claude for financial analysis, modeling, or reporting with aggregated company data is generally low risk. The issues start with personal data:

A practical example: Your finance team uses Claude to analyze employee cost data across entities. If that includes names and salaries, you're processing personal data – which needs a robust GDPR setup before it runs through a US-hosted tool. Even more critical: if you're tracking health-related absences, that's special category data under Art. 9 GDPR. Running that through a US-hosted tool without safeguards is a high compliance risk.
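One way to keep names and individual salaries out of the prompt is to aggregate first and share only the totals. The snippet below is a minimal sketch of that idea, not a compliance guarantee: the field names, the sample records, and the minimum group size of 3 are all assumptions for illustration, and whether aggregated figures still count as personal data in your case is a question for your data protection officer.

```python
from collections import defaultdict

# Hypothetical sample records; field names are assumptions for illustration.
records = [
    {"name": "A. Example", "entity": "DE GmbH", "salary": 80000},
    {"name": "B. Example", "entity": "DE GmbH", "salary": 95000},
    {"name": "C. Example", "entity": "DE GmbH", "salary": 70000},
    {"name": "D. Example", "entity": "AT GmbH", "salary": 60000},
]

MIN_GROUP_SIZE = 3  # assumed threshold: very small groups can still point to a person


def aggregate_by_entity(rows, min_group_size=MIN_GROUP_SIZE):
    """Return per-entity headcount and total cost, without names or single salaries."""
    groups = defaultdict(list)
    for row in rows:
        groups[row["entity"]].append(row["salary"])

    summary = {}
    for entity, salaries in groups.items():
        if len(salaries) < min_group_size:
            # Suppress the total: with one or two people it reveals individual pay.
            summary[entity] = {"headcount": len(salaries), "total_cost": None}
        else:
            summary[entity] = {"headcount": len(salaries), "total_cost": sum(salaries)}
    return summary


summary = aggregate_by_entity(records)
```

Only `summary` would be passed to the AI tool in this setup; the raw `records` stay in your own systems.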

💡 Enterprise and Team plans contractually exclude AI training – no toggle needed. On Pro, you can opt out in settings, but it's a preference, not a contract. That distinction matters for GDPR.

3. Shadow AI, IT Security & the talent problem

The numbers are clear: 97% of organizations that had AI-related security incidents didn't have proper access controls in place. Only 30% of CISOs (Chief Information Security Officers) say they have mature safeguards – even though 73% consider AI agent risks a critical concern.

With AI agents, the risk gets worse. Employees connect tools to email, calendars, and file systems without IT oversight. They schedule automated tasks that run without anyone checking the output. And when something goes wrong, there's often no log to trace what happened or why.
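To make the missing-log problem concrete, here is a minimal sketch of what one audit record per agent action could look like. It is an illustration only: the function names, the field names, and the JSON-lines file format are assumptions, not a feature of any specific AI product.

```python
import json
from datetime import datetime, timezone


def audit_entry(agent: str, action: str, target: str, triggered_by: str) -> str:
    """Build one JSON line describing a single agent action (hypothetical schema)."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "action": action,
        "target": target,
        "triggered_by": triggered_by,
    }
    return json.dumps(entry, sort_keys=True)


def append_to_log(path: str, line: str) -> None:
    """Append the entry to a log file; one line per action keeps the trail readable."""
    with open(path, "a", encoding="utf-8") as log_file:
        log_file.write(line + "\n")
```

With a record like this for every scheduled task, "what happened and why" becomes a lookup instead of a reconstruction.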

Then there's the talent side. Top finance professionals increasingly see AI skills as essential for their careers. If your company blocks access to AI tools, you're not just creating a compliance gap – you're pushing good people toward competitors who let them use these tools every day.

💡 Provide approved, governed tools across the whole org. With clear policies. That’s safer than pretending people won’t use AI.

4. Governance, AI policies & practical next steps

To start with, every company needs a written AI Policy. If an agent sends a wrong email or leaks data, the first question is: did you have rules in place?

What the policy should cover:

- Permitted and prohibited use cases
- Blacklisted input data
- Agent rules
- Human-in-the-loop for anything that leaves the company
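The policy points above can also be written down as a simple check that runs before an agent acts. The following is a sketch under stated assumptions: the use case names, the data categories, and the `AgentRequest` structure are hypothetical placeholders for whatever your own policy defines.

```python
from dataclasses import dataclass

# Hypothetical policy content; replace with the lists from your own written AI policy.
PERMITTED_USE_CASES = {"variance_analysis", "report_drafting"}
BLACKLISTED_DATA = {"health_data", "named_salaries"}


@dataclass
class AgentRequest:
    use_case: str
    data_categories: set
    leaves_company: bool  # e.g. an email to a supplier or customer


def check_request(req: AgentRequest) -> str:
    """Return 'block', 'needs_human_approval' or 'allow' for a planned agent action."""
    if req.use_case not in PERMITTED_USE_CASES:
        return "block"
    if req.data_categories & BLACKLISTED_DATA:
        return "block"
    if req.leaves_company:
        # Human-in-the-loop: nothing goes out without a person signing off.
        return "needs_human_approval"
    return "allow"
```

For example, `check_request(AgentRequest("report_drafting", {"revenue"}, True))` returns `"needs_human_approval"`, because the output would leave the company.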

Technical guardrails:

- Only approved connectors and integrations
- Dedicated folders for Claude – not your entire file system
- Sandbox for testing before production
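Two of these guardrails, the connector allowlist and the dedicated folder, are easy to illustrate. The sketch below assumes a hypothetical workspace path and hypothetical connector names; in practice these controls usually live in the admin settings of the AI tool or in IT policy, not in a script.

```python
from pathlib import Path

APPROVED_CONNECTORS = {"sharepoint_finance", "erp_readonly"}  # hypothetical names
AGENT_ROOT = Path("/data/ai-workspace")  # hypothetical dedicated folder


def connector_allowed(name: str) -> bool:
    """Only connectors on the approved list may be used."""
    return name in APPROVED_CONNECTORS


def path_allowed(candidate: str, root: Path = AGENT_ROOT) -> bool:
    """True only if the path resolves to a location inside the dedicated folder."""
    # resolve() collapses ".." segments, so "workspace/../payroll" is rejected.
    resolved = Path(candidate).resolve()
    return resolved.is_relative_to(root.resolve())  # requires Python 3.9+
```

The path check matters because a request like `/data/ai-workspace/../payroll/all.xlsx` looks like it sits in the workspace but actually points outside it.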

After rollout: document each use case with a "Package Leaflet" – who owns it, what data it touches, what can go wrong, how you mitigate. Review supplier agreements for AI agent access. Update customer contracts with AI and liability clauses.
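A "Package Leaflet" can be as simple as one structured record per use case. Here is a minimal sketch based on the four questions above; the class and field names are assumptions chosen for illustration, not a prescribed template.

```python
from dataclasses import dataclass, field, fields


@dataclass
class PackageLeaflet:
    use_case: str                                      # what the AI is used for
    owner: str                                         # who owns it
    data_touched: list = field(default_factory=list)   # what data it touches
    risks: list = field(default_factory=list)          # what can go wrong
    mitigations: list = field(default_factory=list)    # how you mitigate

    def missing_fields(self) -> list:
        """Names of fields left empty, so incomplete leaflets are easy to spot."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


leaflet = PackageLeaflet(
    use_case="Monthly procurement report",
    owner="Head of Controlling",
    data_touched=["supplier invoices"],
    risks=["wrong totals sent to management"],
)
```

Here `leaflet.missing_fields()` returns `["mitigations"]`, which flags that the use case is not fully documented yet.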

For structured rollouts, YPOG has developed a 5-week implementation matrix covering Governance, IP, Data Protection, and IT Security.

💡 No written AI policy = no defence. If an AI agent leaks data or sends a wrong email, the first question regulators and courts will ask: did you have rules in place?

5. Liability, compliance & falling behind

The real tension: legal compliance vs. competitors moving fast with AI. Block it and you fall behind. Rush it and you create liability.

The risks are concrete – and not equally serious. EU law wasn't designed for AI agents.

Agents can send emails, create orders, disclose trade secrets – the company is liable. Content created with AI might expose you to IP infringement claims. The more agents you use, the higher the risk of uncontrolled liability.

But doing nothing isn’t safe either.

In an exclusive Expert Session for Finance Collective DACH, Anthropic's own finance team walked us through how they use Claude every day:

- SOX documentation drafts in under an hour instead of months
- Procurement reporting automated from 15+ hours to a single prompt
- Statutory reporting generated consistently across multiple entities
- Finance tickets handled at 70% automation with ~30-second response times

These aren't pilots – they're live workflows.

Bottom Line

The legal framework for AI in finance isn’t settled.

But waiting for clarity isn’t an option.

Start with practical governance: written policies, clear data boundaries, approved tools, human-in-the-loop as default.

The biggest risk isn’t adopting AI. It’s not having a plan when your team is already using it.

🔎 CFO Watchlist

SEC Considers Ending Quarterly Earnings Reporting - The SEC is preparing a proposal – expected as soon as April 2026 – that would allow public companies to report semiannually instead of quarterly. If adopted, it's the biggest change to public company reporting in decades. CFOs are already debating the implications for investor relations, board oversight, and audit cycles. 👉 Fortune

Why CFO Peer Networks Matter More Than Ever - AI governance, compliance, liability – these topics are too new for textbooks and too complex for one team alone. The read confirms what we see in our community every week: finance leaders who invest in peer exchange navigate these challenges faster and with fewer mistakes. 👉 CFO.com

🌐 Finance Collective

Where 170+ senior finance leaders exchange ideas on what actually works

Join a curated network for:

CLOSING REMARKS

Thanks for reading 💛

Send your feedback, suggestions, or requests to feature something in future editions to [email protected]. We’d love to include your input.

Follow us on LinkedIn for more updates!

CFO Playbook reflects our personal opinions, not professional advice.